Would you want to rely on a home inspector’s analysis of just the outside of a new home you’re considering for purchase? What about a lab tech running only a partial CT scan, or a radiologist analyzing only part of your MRI, when your health is on the line? Well, personal finance and livelihood aside, you’re doing the same thing if you’re only testing your Web applications from an outsider’s perspective. If you’re going to find everything that matters, you have to test your applications as both an untrusted outsider and a trusted user.

Interestingly, I often see people running unauthenticated scans because they just want a quick view of what “the bad guys” can see and exploit. This is often done in the name of a business partner request or compliance checkbox. What they’re overlooking, though, is that external attackers may already have access to user credentials. Whether those credentials were gleaned from wireless network sniffing, a lost or stolen laptop or smartphone, or password cracking against the application itself, the vulnerabilities behind the login may well be there waiting for someone with ill intent to exploit.

By vulnerabilities, I mean things such as:

• XSS

• SQL injection

• CSRF

• Application logic flaws

• Login mechanism flaws (including flaws related to intruder lockouts, privilege escalation and multi-factor authentication)

Sure, if a user (or external attacker) has login credentials into the application, it can be argued that anything’s fair game. That’s not always the case, especially when it comes to user accounts with lower privileges. Standard user accounts often have limited rights in and around the application – that is until you find a big vulnerability like the ones I’ve listed above.

It’s not always reasonable, but to the extent possible you really should run your scans using login credentials at all user role levels. If it’s not practical then at least run your scans using a login account that’s representative of most users as well as an administrator-level user and expand out from there. You’ll likely be surprised at what you find – especially when it comes to cloud-based applications running in a multi-tenancy configuration. It can get ugly in a hurry.

One important thing to keep in mind when running authenticated scans is: how do you know your authentication worked and that all the necessary pages were crawled and tested? Furthermore, what if the authentication worked but the user account was locked, or the application threw an exception during the scan and didn’t allow the scan to complete? Everything may have looked “normal,” but you could have a false sense of security if not everything was tested.
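One way to answer that question is to spot-check responses captured with the scanner’s session before, during, and after the scan. The following is a minimal sketch, not a feature of any particular scanner: the function name `session_state`, the URL hints, and the text patterns are all assumptions that you would replace with markers from your own application.

```python
import re

# Hypothetical markers (assumptions): adjust these to match your own application.
LOGIN_PATH_HINTS = ("/login", "/signin")
LOGGED_IN_MARKER = re.compile(r"log\s?out|sign\s?out", re.IGNORECASE)
LOCKOUT_MARKER = re.compile(
    r"account (is |has been )?locked|too many (failed )?attempts", re.IGNORECASE
)


def session_state(status_code, final_url, body):
    """Classify one response fetched with the scanner's session.

    Returns "locked", "logged_out", "error", or "authenticated".
    """
    # A lockout message means the test account was disabled mid-scan.
    if LOCKOUT_MARKER.search(body):
        return "locked"
    # A 401 or a redirect back to the login page means the session was lost.
    if status_code == 401 or any(h in final_url.lower() for h in LOGIN_PATH_HINTS):
        return "logged_out"
    # A server error means the page was reached but may not have been tested.
    if status_code >= 500:
        return "error"
    # Only pages showing a logout control are treated as authenticated.
    if LOGGED_IN_MARKER.search(body):
        return "authenticated"
    return "logged_out"
```

Anything other than "authenticated" on a page that requires a login is a sign that the scan results for that page, and possibly everything crawled after it, need a second look.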

Sure, manual analysis is critical here, but you cannot rely on that alone to crawl and test every single page for every possible flaw. A combined approach is the best method. You still need to ensure that your authenticated crawls and scans are working. The reality is that when you perform an authenticated scan, there’s an assumption that everything has been tested. Just know that’s not always the case, so make sure you have the right people on board (i.e. developers and QA staff) to help review your scan results and ensure that you’ve poked and prodded in all the right areas.
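That review can be made more concrete by comparing what the scanner actually crawled against a list of pages the developers or QA staff expect an authenticated user to reach. The sketch below assumes you can export the crawled URLs from your scanner and that someone can supply the expected list. The function names and sample paths are hypothetical.

```python
from urllib.parse import urlsplit


def normalize(url):
    """Reduce a URL to its path so hosts and query strings do not cause false mismatches."""
    path = urlsplit(url).path.rstrip("/")
    return path or "/"


def coverage_gaps(expected_urls, crawled_urls):
    """Return the expected paths that never appear in the scanner's crawl log, sorted."""
    crawled = {normalize(u) for u in crawled_urls}
    return sorted({normalize(u) for u in expected_urls} - crawled)


# Hypothetical data: pages QA expects behind the login vs. what the scanner reported.
expected = ["/account/settings", "/admin/users/", "/reports"]
crawled = [
    "https://app.example/account/settings?tab=1",
    "https://app.example/reports",
]
print(coverage_gaps(expected, crawled))  # prints ['/admin/users']
```

A non-empty result doesn’t prove a vulnerability was missed, but it does tell you where the scan never went and where manual testing should start.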

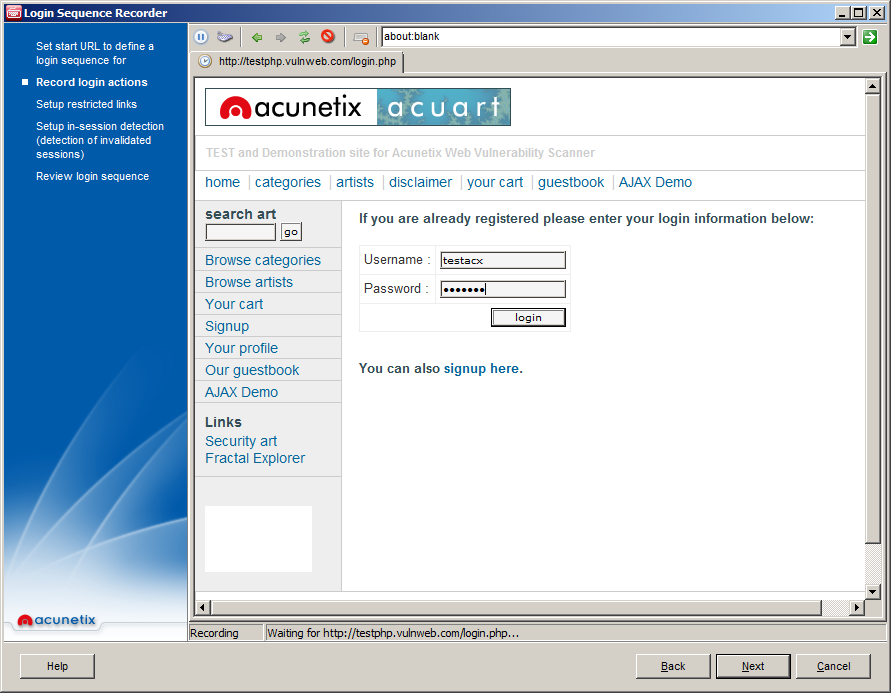

Another thing regarding authenticated scans: form-based login pages can be a real pain. I’ve found that some tools are better at recording and executing login macros than others. Regardless, seat time is one of the best ways to ensure your authentication is working. That means using your scanner as much as possible (even on vendor test sites) and getting to know the nuances of how it behaves when recording and running login macros.

So, test with authentication. Unless and until you do, there’s no way to know for sure what’s there for the taking.
