Note: This article refers to an older version of Acunetix.

Nowadays, more and more websites use URL rewriting to make their URLs friendlier to both users and search engines. With URL rewriting, a URL like http://www.site.com/cms/product.php?action=buy&id=1 is typically rewritten to something like:

http://www.site.com/buy/1.

Prior to Acunetix Web Vulnerability Scanner version 8 (WVS 8), we had two ways to deal with this type of situation:

- We could install AcuSensor, which automatically detects URL rewrites and informs the scanner about the real filenames and parameters.

- We could define URL rewrite rules (either by importing them from .htaccess/httpd.conf or by adding them manually).

Acunetix WVS 8 introduces a new feature to deal with rewritten URLs, called Path Fragments. In WVS 8, the crawler automatically parses URLs and tries to detect whether they are rewritten. If they are, it splits them into path fragments and creates input schemes for them. The Acunetix script engine then works with these input schemes, manipulating each of them in search of vulnerabilities.

To demonstrate this feature, I have prepared a small website that uses URL rewriting. The rewrite rules, defined in a .htaccess file, are listed below.

    RewriteEngine on
    RewriteRule Details/.*/(.*?)/ details.php?id=$1 [L]
    RewriteRule BuyProduct-(.*?)/ buy.php?id=$1 [L]
    RewriteRule RateProduct-(.*?).html rate.php?id=$1 [L]
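
To make the effect of these rules concrete, here is a minimal Python sketch that mimics them with the `re` module. It only approximates mod_rewrite: it assumes the directory prefix has already been stripped from the path and that the first matching rule wins (as with the `[L]` flag). The `RULES` table and the `rewrite` helper are hypothetical names introduced for illustration.

```python
import re

# The three patterns from the .htaccess rules; "{0}" stands in for "$1".
RULES = [
    (re.compile(r"Details/.*/(.*?)/"), "details.php?id={0}"),
    (re.compile(r"BuyProduct-(.*?)/"), "buy.php?id={0}"),
    (re.compile(r"RateProduct-(.*?).html"), "rate.php?id={0}"),
]


def rewrite(path):
    """Return the internal target for a request path, or None if no rule matches."""
    for pattern, template in RULES:
        # Unanchored search, since the patterns carry no ^ or $ anchors.
        match = pattern.search(path)
        if match:
            # Stop at the first matching rule, as the [L] flag would.
            return template.format(match.group(1))
    return None


if __name__ == "__main__":
    for sample in ("Details/web-camera-a4tech/1/", "BuyProduct-2/", "RateProduct-3.html"):
        print(sample, "->", rewrite(sample))
```

Note that whatever text lands in the captured group, including an injected payload, is passed straight through to the `id` parameter of the target script.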

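We’ve defined three rewrite rules. Sample URLs for each rule follow; they correspond to the test URLs listed later in this article.

With the Details rule, the URL contains the description of the product followed by the product ID, for example /Mod_Rewrite_Shop/Details/web-camera-a4tech/1/.

With the BuyProduct rule, the product ID comes directly after the BuyProduct keyword, for example /Mod_Rewrite_Shop/BuyProduct-1/.

With the RateProduct rule, the URL looks like a basic HTML file, though it is not. The product ID is contained inside the actual file name, for example /Mod_Rewrite_Shop/RateProduct-1.html.

Let’s see what happens when we crawl this website with Acunetix WVS 8.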

The Acunetix crawler created three input schemes after analyzing the URLs from this website.

- An input scheme for the RateProduct URL. The URL was split into three parts (a prefix /, one path fragment and a suffix .html). The crawler generated 3 variations for this scheme and will manipulate all the combinations of these variations.

- An input scheme for the BuyProduct URL. Same situation: one path fragment, 3 variations.

- An input scheme for the Details URL. In this case there are two path fragments and 3 variations.
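
The idea of an input scheme can be sketched in a few lines of Python. This is not how Acunetix implements it internally: `InputScheme` and its fields are hypothetical names, and the variation values are assumptions based on the product IDs 1 to 3 and the single product description that appears in this article. Each scheme holds a prefix, one list of observed values per path fragment, and a suffix, and it enumerates every combination.

```python
from dataclasses import dataclass
from itertools import product


@dataclass
class InputScheme:
    prefix: str           # fixed text before the first fragment
    fragments: list       # one list of observed values per path fragment
    suffix: str           # fixed text after the last fragment
    separator: str = "/"  # text joining consecutive fragments

    def urls(self):
        """Yield one URL for every combination of fragment values."""
        for combo in product(*self.fragments):
            yield self.prefix + self.separator.join(combo) + self.suffix


# One path fragment with three assumed variations, prefix "/" and suffix ".html".
rate = InputScheme("/", [["RateProduct-1", "RateProduct-2", "RateProduct-3"]], ".html")

# Two path fragments: an assumed description value and three assumed IDs.
details = InputScheme("/Details/", [["web-camera-a4tech"], ["1", "2", "3"]], "/")

if __name__ == "__main__":
    for scheme in (rate, details):
        for url in scheme.urls():
            print(url)
```

Keeping the fragments separate from the fixed prefix and suffix is what lets a test script modify one fragment at a time while leaving the rest of the URL intact.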

These input schemes are later sent to the script engine so the security scripts will test all the possible combinations and generate URLs like:

- /Mod_Rewrite_Shop/BuyProduct-1%27%22/ – test for SQL injection (%27%22 is the URL-encoded form of '")

- /Mod_Rewrite_Shop/BuyProduct-2%27%22/

- /Mod_Rewrite_Shop/BuyProduct-3%27%22/

- /Mod_Rewrite_Shop/RateProduct-1%27%22.html

- /Mod_Rewrite_Shop/Details/web-camera-a4tech/1%27%22/

- and so on …
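
The test URLs in this list follow a simple pattern: a payload is appended to one path fragment and then URL-encoded. A rough Python sketch of that pattern is shown here, assuming product IDs 1 to 3; `inject` is a hypothetical helper, and the scanner's actual security scripts are not shown in this article.

```python
from urllib.parse import quote

PAYLOAD = "'\""  # a single quote followed by a double quote


def inject(prefix, fragment, suffix, payload=PAYLOAD):
    """Build a test URL with the payload appended to one path fragment."""
    # safe="" makes quote() percent-encode every reserved character in the fragment.
    return prefix + quote(fragment + payload, safe="") + suffix


if __name__ == "__main__":
    for product_id in ("1", "2", "3"):
        print(inject("/Mod_Rewrite_Shop/BuyProduct-", product_id, "/"))
    print(inject("/Mod_Rewrite_Shop/RateProduct-", "1", ".html"))
    print(inject("/Mod_Rewrite_Shop/Details/web-camera-a4tech/", "1", "/"))
```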

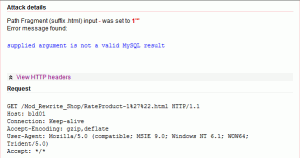

Because path fragments do not have names like normal GET/POST/COOKIE parameters, the scanner cannot refer to them by name, so you need to view the HTTP headers to see exactly where the vulnerability is present. An SQL injection in a path fragment shows up in the request line itself, for example in a request for /Mod_Rewrite_Shop/BuyProduct-1%27%22/.
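
When reading the HTTP headers, one way to pinpoint the affected fragment is to scan the path in the request line for the payload. The following Python sketch assumes a request line of the usual `METHOD path HTTP/version` form; `find_tainted_segments` is a hypothetical helper for illustration and not part of Acunetix.

```python
from urllib.parse import unquote


def find_tainted_segments(request_line, payload="'\""):
    """Return (index, decoded segment) for each path segment containing the payload."""
    method, target, version = request_line.split(" ", 2)
    path = target.split("?", 1)[0]  # ignore any query string
    segments = path.strip("/").split("/")
    return [
        (index, unquote(segment))
        for index, segment in enumerate(segments)
        if payload in unquote(segment)
    ]


if __name__ == "__main__":
    line = "GET /Mod_Rewrite_Shop/BuyProduct-1%27%22/ HTTP/1.1"
    print(find_tainted_segments(line))
```

The returned index is the position of the segment within the path, which stands in for the parameter name that a path fragment lacks.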

These types of vulnerabilities in path fragments are pretty common nowadays, but until now it was not possible to automatically check for and detect them. By intelligently generating path fragments from all the URLs found on the scanned website, Acunetix Web Vulnerability Scanner version 8 is able to find such vulnerabilities.
